On November 25, 2019, the National Transportation Safety Board issued its final report on the March 2018 fatal accident in Tempe, Arizona, involving an autonomous vehicle and a pedestrian. NTSB’s position is that the accident resulted from a combination of an “inadequate safety culture” at the developer and “automation complacency,” which it describes as the failure of the human safety driver to “monitor an automation system for its failures.”

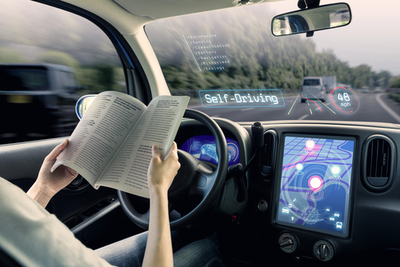

It doesn’t take a deep dive into news reports about AVs to see that many developers envision AVs as systems in which vehicle occupants are entirely “automation complacent”—watching movies, playing video games, doing business or otherwise ignoring the outside world. Everybody from BMW to IKEA has presented concepts for AV interiors, involving everything from social media feeds or e-mails on vehicle windows to projections of movies or video games on the windshield. Add in noise-cancellation technology, lighting and temperature control (not to mention alcohol) and the AV is designed to induce “complacency,” exactly what NTSB criticized in this accident as well as the Tesla Autopilot crashes in Florida and Culver City, California: “driver inattention and overreliance on vehicle automation.” As Hamlet reminds us, “there’s the rub.”

NTSB’s vision of a safe AV environment appears to require an attentive, fully engaged safety driver, ready at all times to take command of the vehicle when it encounters a malfunction or processing error. (Recall that NTSB described one problem in the Tempe crash as “the system’s inability in this crash to correctly classify and predict the path of the pedestrian crossing the road midblock.” In other words, the software did not recognize the pedestrian with the bicycle for what she was.) What makes this finding so difficult is that this does not appear to be the kind of error that would be brought to the attention of the putative safety driver in time to take action, even assuming that the driver is not otherwise “complacent.” How will the driver know that the automated system is not responding? Or not responding fast enough? Or just plain confused?

Following this thread leads to the conclusion that there must be a driver at all times, perhaps two; NTSB commended Uber for “postcrash inclusion of a second vehicle operator during testing of the ADS, along with real-time monitoring of operator attentiveness” as a step forward. Two drivers? If there is to be a driver, what controls must be at the driver’s disposal? Presumably these include devices to steer the vehicle, to stop it and to assess what is wrong with the automated systems. When must the vehicle warn the driver, recognizing that studies show reaction time from “complacency” to action is slow? It doesn’t take much more to conclude that the safety driver must always be engaged because of possible system failures or software glitches. All of which is prudent but entirely at odds with a vehicle environment where developers hope everyone can play Fortnite during the morning commute.

There are deeper questions implicit in this: how safe is safe enough to allow an AV’s occupants to be complacent? What failure rate of system glitches, sensor suite problems, confusion or general bad stuff is low enough to allow automated operation without requiring human control? This is not the “trolley problem.” It is one step earlier: the point at which the vehicle recognizes (or doesn’t) that a problem exists. How does the vehicle know when the trolley problem is here? Finally, how does this mindset affect driverless pilot programs already deployed or business models based on driverless ride-hailing services? AV 4.0, rolled out at CES 2020, leaves such questions to voluntary standards; NTSB’s report on Tempe does not.

These are difficult questions of risk management and, therefore, of insurance. How much risk is too much to bear… or share? Neither the NTSB Tempe Report nor AV 4.0 gives us the answers.
